Regular Articles

Vol. 14, No. 1, pp. 58–64, Jan. 2016. https://doi.org/10.53829/ntr201601ra1

Body-mind Sonification to Improve Players Actions in Sports

Abstract

It is essential that we recognize both our own physical movements and mental condition if we are to achieve and improve goal-directed actions in sports. However, it is not easy to adequately understand one’s own body-mind state during complicated sports actions. A potentially better way to overcome this problem is to use auditory feedback because auditory perception has higher temporal resolution than visual perception, and it interferes very little with the performance of an action. In this article, we propose real-time auditory feedback techniques designed to sonify the temporal coordination of the body and the excessive activation caused by mental pressure when participating in sports based on surface electromyography and acceleration signals. Our aim was to determine when and how the body-mind behaves. We believe that these techniques will help players learn the skill needed for a desired action and will reveal a player’s physical and mental condition when playing sports.

Keywords: auditory feedback, real time, motor learning

1. Introduction

It is essential that we understand our own movement if we are to achieve and improve goal-directed actions in sports. However, it is not easy to adequately recognize the state of one’s own body during complicated movements involving multiple segments of the body, as when a golfer needs to coordinate various movements of the arms, trunk, and legs quickly and correctly when hitting a tee shot. In addition, the body is affected by a person’s mental condition; that is, the body and mind are not independent but are closely linked. Even a highly skilled player may not perform well under mental pressure. Therefore, it is very important to understand one’s own body-mind state during sports actions so as to perform satisfactorily in an actual sports game.
NTT has long researched the neural mechanisms behind motor control, sensory perception, and emotional processing in humans. We have incorporated these findings and know-how into feedback techniques designed to convey certain key features of the body-mind state during actual sports actions. Specifically, we propose using auditory feedback to convey the temporal structure of segmental motions and muscle activity through synthetic sound. Our aim is to make a player intuitively aware of when and how his/her body-mind behaves.

2. Use of auditory feedback

To sense one’s own motor state (e.g., posture, motion, and muscle activity) during ongoing movement, we mainly depend on proprioception, which originates in the sensors in muscles and joints. However, proprioception offers only rough spatiotemporal resolution. Thus, many studies have proposed ways of presenting the motor state through other modalities, for example, vision, audition, or haptics, to compensate for poor proprioceptive information [1]. Visual feedback has been the most widely used way of displaying body motion (e.g., looking at snapshots and video). However, visual feedback is also assumed to have some limitations. First, we cannot fully use vision during an ongoing action because vision is commonly used for other goals (e.g., looking at a target). Furthermore, vision is expected to be limited in terms of showing the temporal structure of segmental activities because of the insufficient temporal resolution of human visual perception, even though the very quick coordination of multiple body segments is a key feature in some sports.

A potentially better way to overcome the above problems is to use auditory feedback. One reason for this is that, unlike vision, human auditory perception is much less likely to interfere with performing an action, which is a benefit when providing feedback in real time. Auditory perception also has higher temporal resolution than vision.
In fact, human beings often utilize auditory feedback to understand the temporal structure of motor behavior in daily life. A very familiar example is speech articulation. It is well known that speech articulators (e.g., the lips and tongue) move in a very quick and complicated pattern, and auditory feedback plays a crucial role in controlling speech articulation [2]. We designed techniques for real-time sonification of the body-mind state to extend the human auditory system so that it can be used for motor control of the body in addition to speech.

3. Sonification of sports action

Although previous studies have already applied auditory feedback to sports, most of them have sonified behavioral outcomes (e.g., ski displacement [3]) or individual segmental activities (e.g., the timing of wrist and ankle movements in karate [4]). However, feedback on individual outcomes and activities may be insufficient for motor recognition and learning because the spatiotemporal coordination of the body appears to be critical in terms of achieving a skillful movement in most sports. In contrast, our idea is to sonify body coordination, namely when and how a player controls his/her body, based on muscle activity and acceleration signals in multiple target segments. We expect our artificially provided auditory feedback to be integrated with original proprioceptive information during sports actions and that this integration will facilitate improvements in sports skills.

3.1 Sonifying segmental coordination

For most sports, it is critical to coordinate multiple body segments sequentially. For instance, when pitching a baseball, a pitcher needs to operate his/her body segments in sequence from the leg to the arm, which is a well-known segmental kinetic chain [5], and a novice pitcher cannot perform this sequence well.
Thus, we attempted to sonify such sequential patterns during pitching using acceleration signals recorded on the pitcher’s trunk, and then to apply the sonification to pitching practice for novice participants. An example of the sonification of the pitching sequence of the trunk in a single novice participant is shown in Fig. 1. We attached wireless acceleration sensors (Trigno, Delsys) at three different positions (P1, P2, and P3) on the back of the trunk (Fig. 1(b)) and detected three axes of acceleration signals at a 300-Hz sampling rate. We used the analog acceleration signals during pitching to calculate two acceleration components in real time, namely, trunk rotation ($A_1$) and tilting ($A_2$); we calculated $A_1$ and $A_2$ from the P2 minus P1 signals and the P3 minus P1 signals, respectively (top two panels in Fig. 1(c)). A sound ($S$) was then synthesized as

$$S(t) = A_1(t)\,S_1 + A_2(t)\,S_2, \tag{1}$$

where $S_1$ and $S_2$ indicate band-limited pink noise with peak frequencies of 100 and 500 Hz, respectively. The lowest panel in Fig. 1(c) shows a spectrogram of the synthesized sound. Although the two frequency components in this spectrogram are unclear, a player who performs a good trunk motion would be able to hear the two components separately (see Fig. 1(d)). Time 0 approximately corresponds to the timing of ball release.

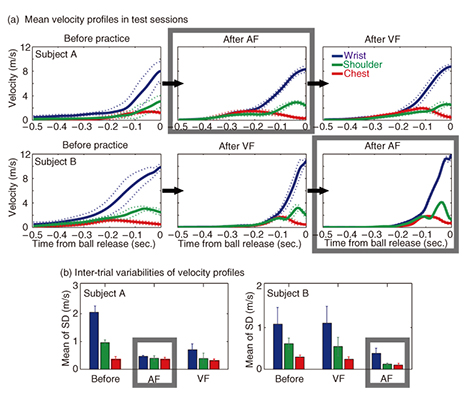
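
The mixing rule in Eq. (1) can be sketched in a few lines of Python. This is only an illustrative sketch under several assumptions that the article does not specify: each acceleration component is taken as the magnitude of the difference between two three-axis sensor signals, the band-limited pink noise is approximated by a sum of random-phase sinusoids spanning one octave above the peak frequency with power falling as 1/f, and the audio rate `FS` and the helper names (`band_noise`, `component`, `upsample`, `sonify`) are hypothetical.

```python
import math
import random

FS = 8000  # audio sample rate in Hz (assumed; not given in the article)

def band_noise(n, peak_hz, rng, n_partials=32):
    """Approximate band-limited pink noise with random-phase sinusoids.

    Partials cover peak_hz up to (almost) 2 * peak_hz; amplitude ~ 1/sqrt(f),
    so power falls as 1/f and is largest at peak_hz. Output is peak-normalized.
    """
    freqs = [peak_hz * (1.0 + k / n_partials) for k in range(n_partials)]
    phases = [rng.uniform(0.0, 2.0 * math.pi) for _ in freqs]
    out = []
    for i in range(n):
        t = i / FS
        out.append(sum(math.sin(2.0 * math.pi * f * t + p) / math.sqrt(f)
                       for f, p in zip(freqs, phases)))
    peak = max(abs(x) for x in out) or 1.0
    return [x / peak for x in out]

def component(p_a, p_ref):
    """Magnitude of the difference between two 3-axis acceleration streams
    (an assumed way of reducing 'P2 minus P1' to a single envelope)."""
    return [math.dist(a, r) for a, r in zip(p_a, p_ref)]

def upsample(env, fs_in, n_out):
    """Sample-and-hold an envelope from fs_in up to the audio rate FS."""
    return [env[min(int(i * fs_in / FS), len(env) - 1)] for i in range(n_out)]

def sonify(p1, p2, p3, fs_acc=300, seed=0):
    """S(t) = A1(t) * S1 + A2(t) * S2, as in Eq. (1)."""
    rng = random.Random(seed)
    a1 = component(p2, p1)  # trunk rotation, A1
    a2 = component(p3, p1)  # trunk tilting, A2
    n = len(p1) * FS // fs_acc
    a1_up, a2_up = upsample(a1, fs_acc, n), upsample(a2, fs_acc, n)
    s1 = band_noise(n, 100.0, rng)  # carrier for A1
    s2 = band_noise(n, 500.0, rng)  # carrier for A2
    return [x1 * c1 + x2 * c2 for x1, c1, x2, c2 in zip(a1_up, s1, a2_up, s2)]
```

With this structure, a trunk that moves as a rigid block (all three sensors reporting the same acceleration) produces silence, while rotation and tilting each drive their own frequency band, which is what lets a listener tell the two components apart.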

The above sonification (auditory feedback) was applied while two novice participants engaged in pitching practice that consisted of repeating 100 non-dominant arm pitches with a fixed step length and moderate effort (Fig. 1(a)). In particular, they focused on trunk rotation and tilting, which are targeted in the feedback information, because novices frequently exhibit poor trunk motion [6]. They also performed other practice trials using visual feedback (video streaming delayed by 6 s) in a separate session for comparison with the effect of auditory feedback. The order of auditory and visual practice sessions was counterbalanced between the two participants. At the beginning of each practice session, either the sound (Fig. 1(d)) or a video of an expert’s pitch was provided as a practice target. Before and after each practice session, we ran a test session in which we measured the chest, shoulder, and wrist motions in 10 trials without any feedback using a motion capture system (Oqus 300, Qualisys).

The mean velocity waveforms of the three segments (chest, shoulder, and wrist) in each test session are shown in Fig. 2(a) for each participant. Both auditory feedback (AF) and visual feedback (VF) practice improved the body sequence; namely, the sequential pattern of peak velocity from the chest to the wrist was clearer than in pre-practice trials. The inter-trial deviation of velocities for each test session is shown in Fig. 2(b). Interestingly, practice with auditory feedback resulted in less velocity deviation across test trials than practice with visual feedback. This result suggests that our real-time sonification for segmental coordination enhances the inter-trial repeatability of movements, compared with standard visual feedback.

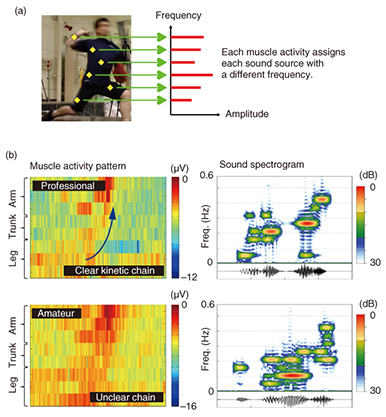
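
The two quantities compared in Fig. 2, the order of peak velocities and the inter-trial deviation, could be computed along the following lines. This is a sketch under assumptions: the article does not define how the deviation was calculated, so it is taken here as the pointwise standard deviation across trials averaged over time, and the function names are hypothetical.

```python
import statistics

def mean_waveform(trials):
    """Pointwise mean across trials (each trial is a list of velocity samples)."""
    return [statistics.fmean(col) for col in zip(*trials)]

def inter_trial_deviation(trials):
    """Pointwise standard deviation across trials, averaged over time
    (an assumed definition of the deviation plotted in Fig. 2(b))."""
    return statistics.fmean([statistics.stdev(col) for col in zip(*trials)])

def peak_index(waveform):
    """Sample index at which the waveform reaches its maximum."""
    return max(range(len(waveform)), key=lambda i: waveform[i])

def is_sequential(chest, shoulder, wrist):
    """True when peak velocity occurs in the order chest, shoulder, wrist."""
    return peak_index(chest) < peak_index(shoulder) < peak_index(wrist)
```

A lower `inter_trial_deviation` value for a test session would correspond to more repeatable movements across the 10 test trials.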

3.2 Sonifying coordination of muscle activity

The above sonification method expresses the kinematics of movement based on acceleration signals of the body. We propose here another sonification method for body state that describes the dynamics of movement, that is, the muscle activity pattern. Even if a player is made aware of a difference in motion by the sonification of kinematics, it is probably difficult to understand how to exert a force that will improve the movement because inferring the required forces from the motion is a theoretically ill-posed problem. Thus, we attempted to sonify the sequential muscle activity patterns, which more directly reflect the exerted force, based on multiple electromyography (EMG) signals from the trunk and the upper and lower limb muscles.

An example of the sonification of the muscle activity pattern of pitching is shown in Fig. 3. The generated sound consisted of multiple sound sources whose fundamental frequencies were different for every muscle and whose amplitudes were varied in proportion to the EMG activity (Fig. 3(a)). An expert (former professional) pitcher almost always exhibited a clear kinetic chain of muscle activity from the leg to the arm muscle (upper-left panel in Fig. 3(b)), while an amateur pitcher did not (lower-left panel in Fig. 3(b)), indicating unnecessary muscle activation. Therefore, the expert’s sound is very rhythmical (upper-right panel in Fig. 3(b)), while the amateur’s is slurred (lower-right panel in Fig. 3(b)). This sonification can be described as an instrument type of sonification, and a player can hear the difference in the muscle activity pattern as a melody. Using this sonification, a player can move so as to make as purposeful a sound as possible.

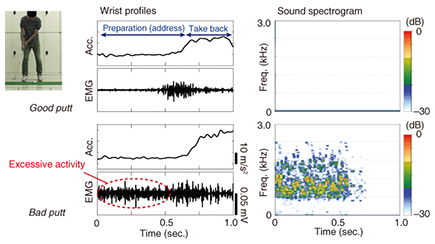
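
A minimal sketch of this instrument type of sonification is given below, assuming one sine tone per muscle and a rectified moving-average envelope as the measure of EMG activity. The article does not state the waveform, the envelope method, or the pitches used, so those choices, the audio rate `FS`, and the muscle names in the usage note are hypothetical.

```python
import math

FS = 8000  # audio sample rate in Hz (assumed)

def emg_envelope(emg, window):
    """Rectify a raw EMG trace and smooth it with a trailing moving average."""
    rect = [abs(x) for x in emg]
    out, acc = [], 0.0
    for i, x in enumerate(rect):
        acc += x
        if i >= window:
            acc -= rect[i - window]  # drop the sample leaving the window
        out.append(acc / min(i + 1, window))
    return out

def instrument_sonify(envelopes, pitches_hz):
    """Sum one sine tone per muscle, each scaled by that muscle's envelope.

    envelopes:  {muscle: envelope samples at the audio rate FS}
    pitches_hz: {muscle: fundamental frequency assigned to that muscle}
    """
    n = len(next(iter(envelopes.values())))
    out = [0.0] * n
    for muscle, env in envelopes.items():
        f0 = pitches_hz[muscle]
        for i in range(n):
            out[i] += env[i] * math.sin(2.0 * math.pi * f0 * i / FS)
    return out
```

For example, calling `instrument_sonify({"leg": leg_env, "arm": arm_env}, {"leg": 220.0, "arm": 440.0})` would render leg activity as a lower tone and arm activity as a higher one, so a clean leg-to-arm sequence is heard as two successive notes while overlapping activation is heard as a blurred chord.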

3.3 Sonification of excessive muscle activation

Some sports players cannot perform well because they try too hard, such as when putting while playing golf. This corresponds to the unintentional excessive activation of the muscles probably caused by mental pressure. That is, such excessive muscle activation reflects the state of mind. This phenomenon is very common and very serious in an actual sports match, but it is often hard to notice it. Therefore, it would be useful to make a player aware of this potential for excessive muscle activation. An example of the sonification of overflow of muscle activity in the initial stages (address and take back) of putting in golf is shown in Fig. 4, based on EMG and acceleration signals of the wrist extensor muscles. The sound signal (S) consisted of a periodic waveform whose amplitude and fundamental frequency were varied in proportion to the short-term power of the EMG activity (E) divided by the acceleration (A) as follows:

$$S(t) = \frac{E(t)}{A(t)}. \tag{2}$$
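
The ratio in Eq. (2) can be illustrated with the following sketch. It rests on assumptions not stated in the article: the acceleration magnitude is floored at a small value `EPS` so that the ratio stays finite when the wrist is still, the periodic waveform is a sine tone, and the constants `FS`, `base_hz`, `hz_per_unit`, and `gain` are hypothetical.

```python
import math

FS = 8000   # audio sample rate in Hz (assumed)
EPS = 0.05  # assumed floor on acceleration magnitude; avoids division by zero

def short_term_power(emg, window):
    """Mean squared EMG over a trailing window (one value per sample)."""
    out = []
    for i in range(len(emg)):
        seg = emg[max(0, i - window + 1): i + 1]
        out.append(sum(v * v for v in seg) / len(seg))
    return out

def warning_control(emg_power, accel_mag, eps=EPS):
    """Control signal of Eq. (2): EMG power over (floored) acceleration."""
    return [e / max(abs(a), eps) for e, a in zip(emg_power, accel_mag)]

def warning_sonify(control, base_hz=200.0, hz_per_unit=50.0, gain=0.1):
    """Sine tone whose amplitude and pitch both rise with the control signal."""
    out, phase = [], 0.0
    for c in control:
        # Accumulate phase so the pitch can change without clicks.
        phase += 2.0 * math.pi * (base_hz + hz_per_unit * c) / FS
        out.append(min(1.0, gain * c) * math.sin(phase))
    return out
```

Under these assumptions, a tense but motionless wrist gives a large ratio and therefore a loud, high tone, whereas the same EMG power during movement gives a small ratio and a nearly inaudible one.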

The sound mainly reflects the EMG activity when the acceleration is kept at almost zero. Therefore, the golfer could hear annoying sounds during the preparation (address) period when the muscle was activated more than necessary while the wrist moved less (lower panels in Fig. 4). Conversely, the golfer could not hear these sounds when the muscle was relaxed during preparation (upper panels in Fig. 4). Little sound was produced when the wrist was moving (take-back period) because the muscle activity and motion were coupled. This is a warning type of sonification, unlike the instrument type described in section 3.2, and the player aims to produce as little sound as possible.

4. Future developments

This article explained some novel examples of sonification of the body-mind state in sports actions. This sonification has the potential benefit of making a player aware of the coordination of movements and of his/her condition under mental pressure. However, the information to be conveyed about the body-mind state and the auditory means of conveying it will vary according to the objectives and the skill level of the player. For example, the sonification of a segmental sequence (Fig. 1) would be more useful in helping a novice player to acquire the basic temporal pattern of a target action, while an instrument type of sonification of muscle activity (Fig. 3) may be more useful in helping an intermediate player to improve, rather than acquire, a given movement. Furthermore, it is likely that the sonification of excessive muscle activation (Fig. 4) is beneficial in terms of recognizing one’s own mental state in an actual sports game.

We should pay attention to other elements of sonification. For instance, it is critical to determine what to provide in sonification, namely, the key essence or the knack of a target action, before applying our sonification. It is also important to combine it with other techniques to effectively improve a player’s performance.
It would be useful, for example, to combine it with visual feedback to characterize the spatial structure of an action, such as the form, while auditory feedback seems more appropriate for characterizing the temporal structure of an action. Additionally, a wearable device such as the hitoe fabric bioelectrode developed by Toray and NTT would make the sonification application easy to use for players and could potentially record EMG signals during the sports action. Further research is needed to develop effective ways to enable a player to learn the skills needed for a desired action and to reveal a player’s condition when playing sports.

References
